8 Advanced parallelization - Deep Learning with JAX

By a mysterious writer

Description

Using easy-to-revise parallelism with xmap() · Compiling and automatically partitioning functions with pjit() · Using tensor sharding to achieve parallelization with XLA · Running code in multi-host configurations
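As a rough illustration of the tensor-sharding topic listed above, here is a minimal sketch that is not taken from the chapter itself. It assumes a recent JAX release and uses `jax.jit` together with the `jax.sharding` API (`Mesh`, `NamedSharding`, `PartitionSpec`) rather than `xmap()` or `pjit()`, since those experimental entry points have changed across JAX versions. The mesh axis name `"data"`, the array shapes, and the `forward` function are hypothetical choices made only for this example.

```python
import jax
import jax.numpy as jnp
import numpy as np
from jax.sharding import Mesh, NamedSharding, PartitionSpec as P

# Build a 1-D device mesh over whatever devices are available
# (this also works with a single CPU device).
devices = np.array(jax.devices())
mesh = Mesh(devices, axis_names=("data",))  # "data" is an assumed axis name
n = devices.size

# Hypothetical inputs: a batch whose leading dimension divides evenly
# across the devices, and a small weight matrix.
x = jnp.arange(n * 4 * 3, dtype=jnp.float32).reshape(n * 4, 3)
w = jnp.ones((3, 2), dtype=jnp.float32)

# Split the batch dimension of x across the "data" axis; replicate w.
x_sharded = jax.device_put(x, NamedSharding(mesh, P("data", None)))
w_replicated = jax.device_put(w, NamedSharding(mesh, P()))

@jax.jit
def forward(x, w):
    # XLA partitions this computation according to the input shardings.
    return jnp.tanh(x @ w)

y = forward(x_sharded, w_replicated)
print(y.shape)
print(y.sharding)
```

On a single device the result is identical to the unsharded computation; with several devices, each one holds a slice of the batch and the compiler decides how to lay out the output.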

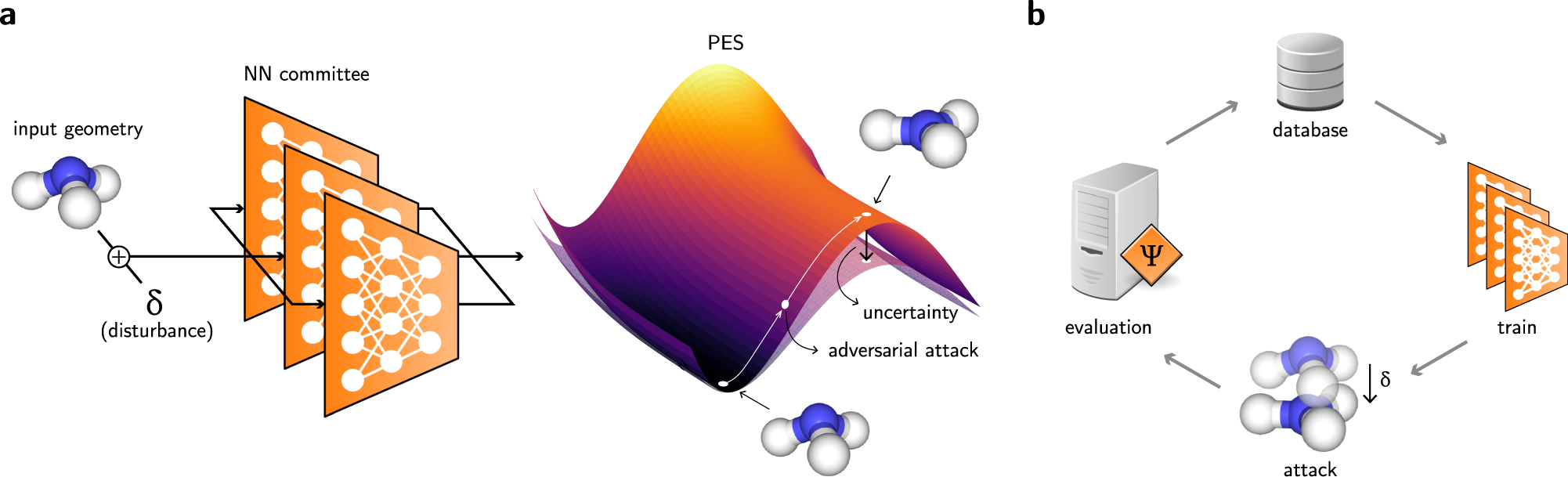

Differentiable sampling of molecular geometries with uncertainty

Top 11 Machine Learning Software - Learn before you regret

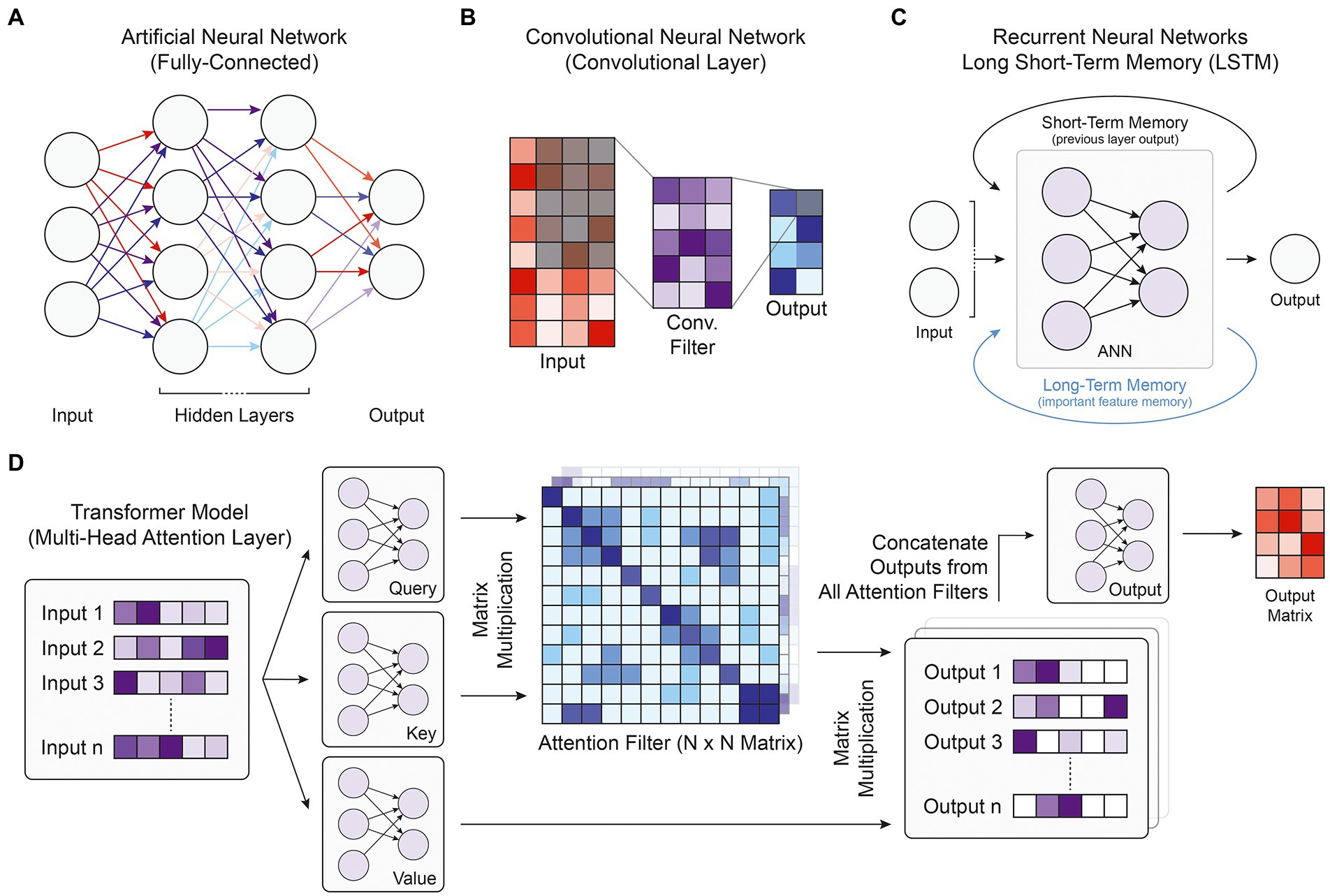

Frontiers Deep learning approaches for noncoding variant

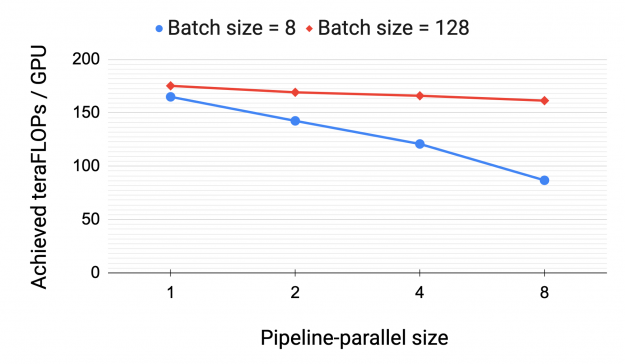

Scaling Language Model Training to a Trillion Parameters Using

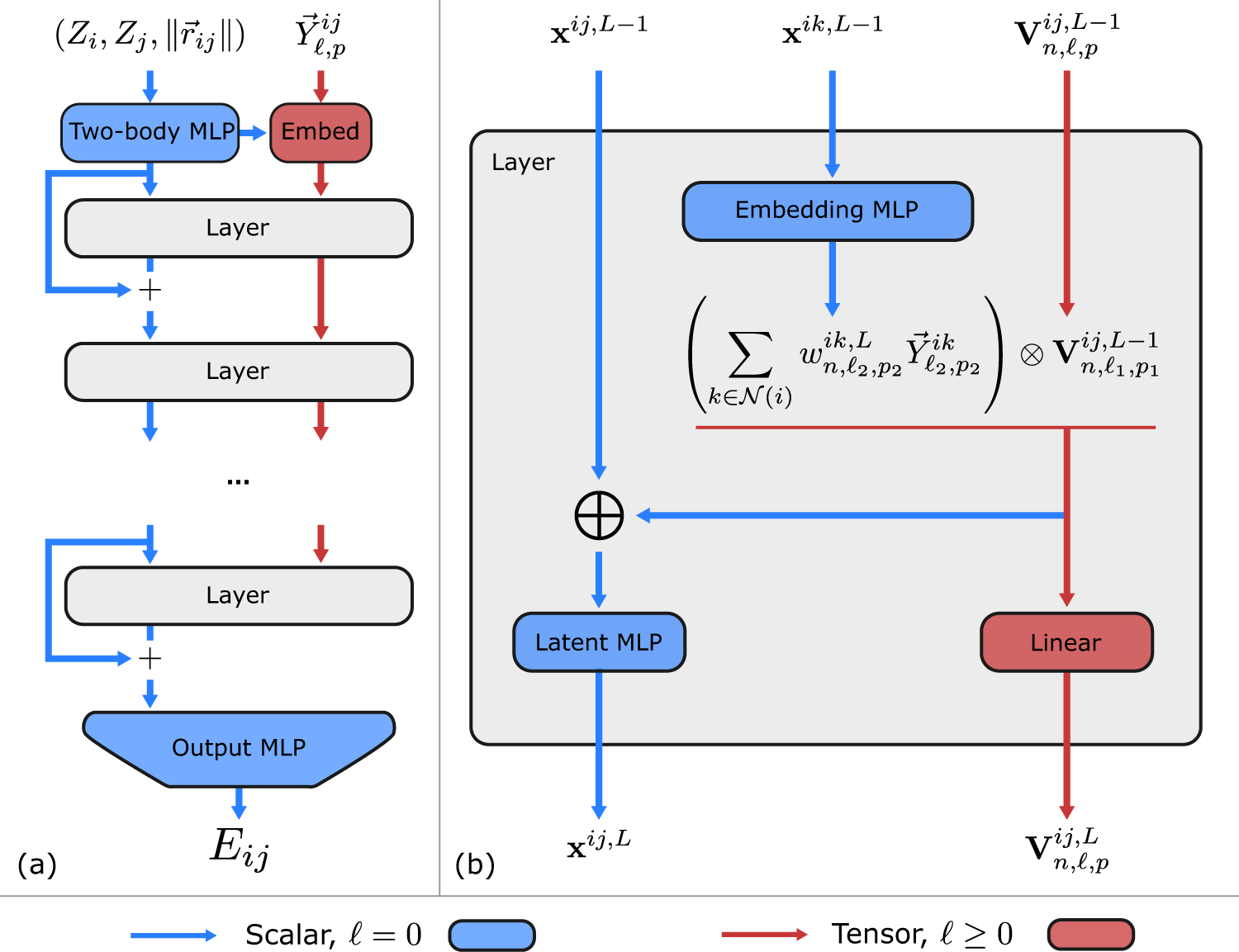

Learning local equivariant representations for large-scale

Why You Should (or Shouldn't) be Using Google's JAX in 2023

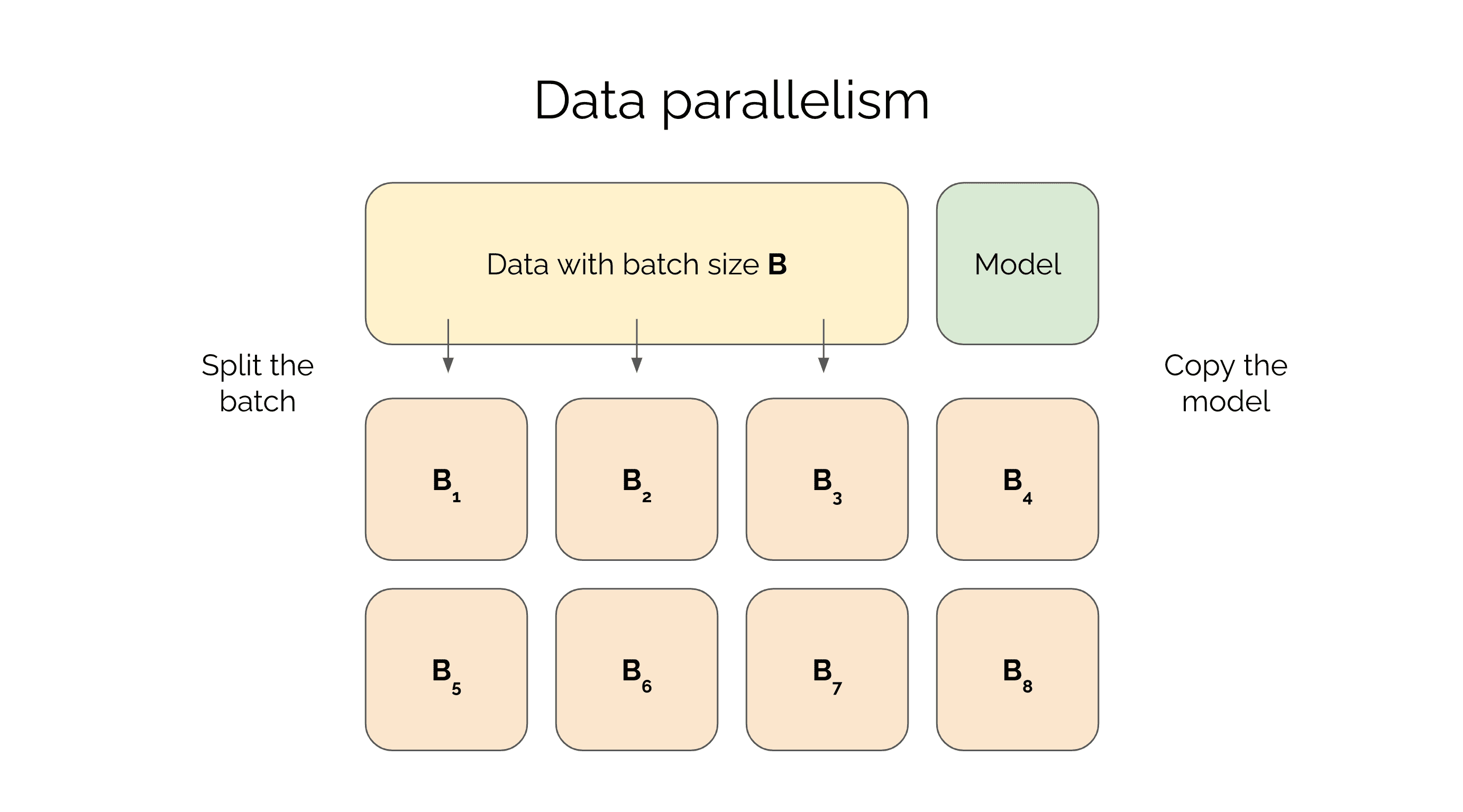

Training Deep Networks with Data Parallelism in Jax

Writing a Training Loop in JAX and Flax

Learn JAX in 2023: Part 2 - grad, jit, vmap, and pmap
